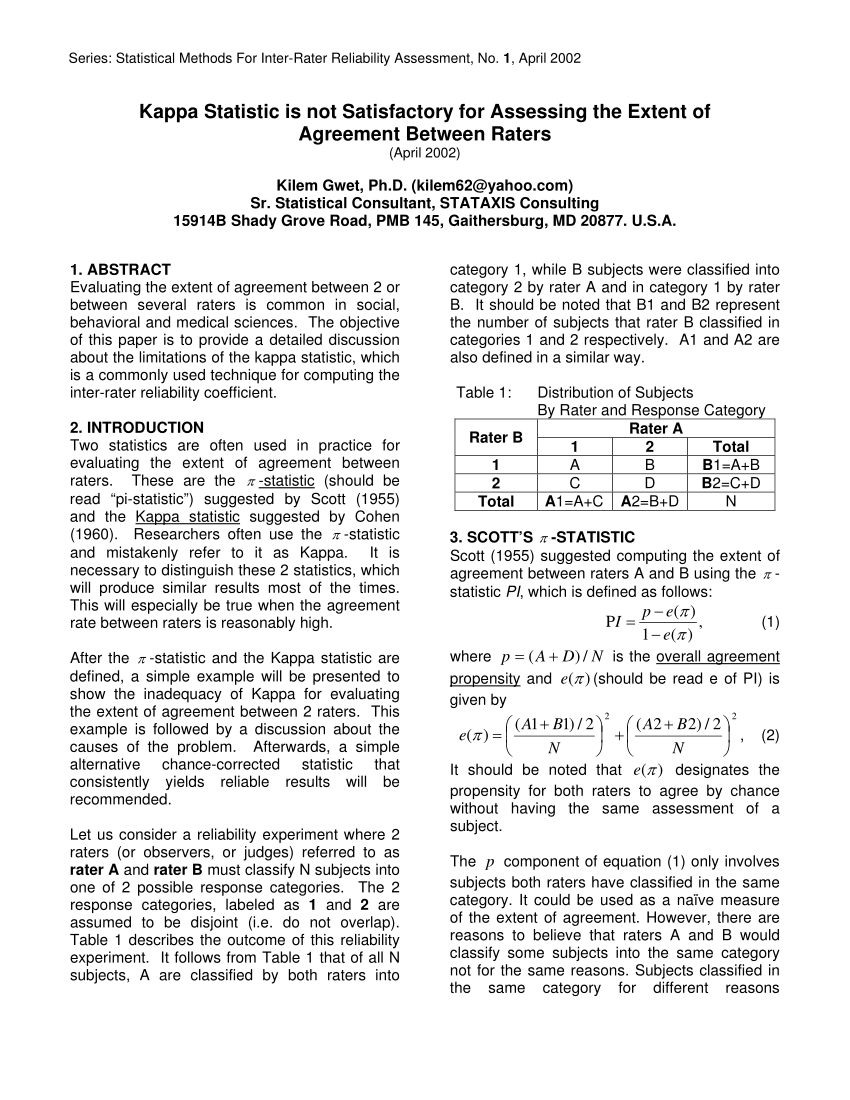

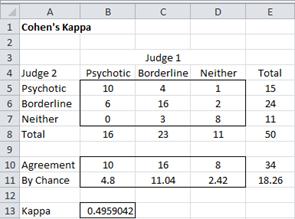

Agree or Disagree? A Demonstration of An Alternative Statistic to Cohen's Kappa for Measuring the Extent and Reliability of Ag

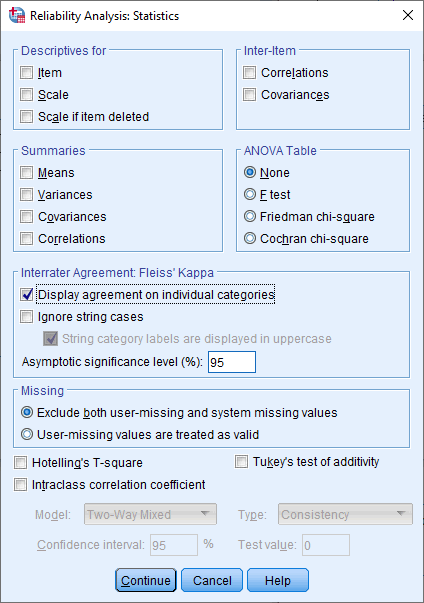

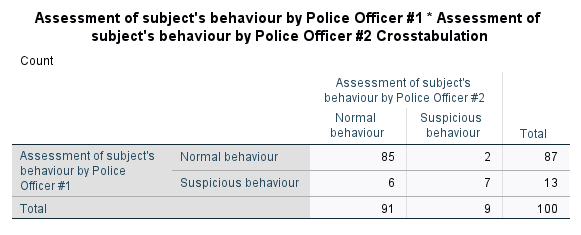

Cohen's kappa in SPSS Statistics - Procedure, output and interpretation of the output using a relevant example | Laerd Statistics

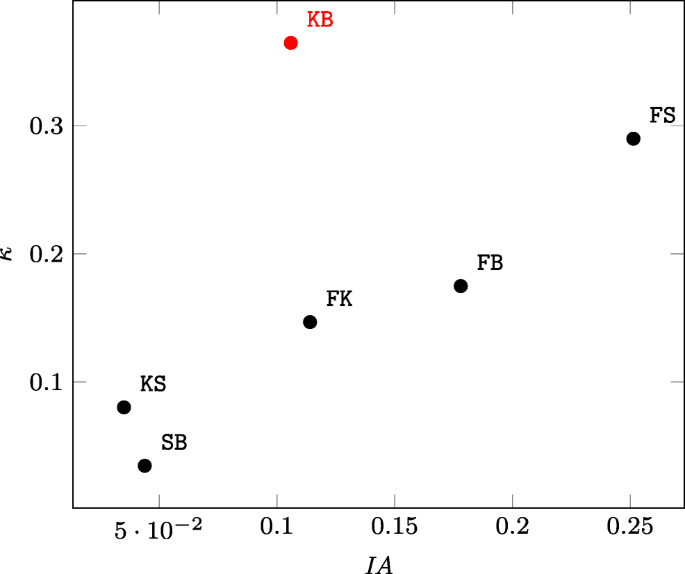

An Evaluation of Interrater Reliability Measures on Binary Tasks Using <i>d-Prime</i>. - Abstract - Europe PMC

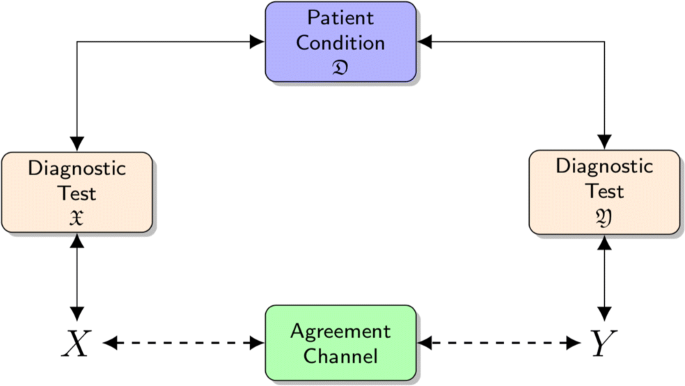

Beyond kappa: an informational index for diagnostic agreement in dichotomous and multivalue ordered-categorical ratings | SpringerLink

Inter-Annotator Agreement (IAA). Pair-wise Cohen kappa and group Fleiss'… | by Louis de Bruijn | Towards Data Science

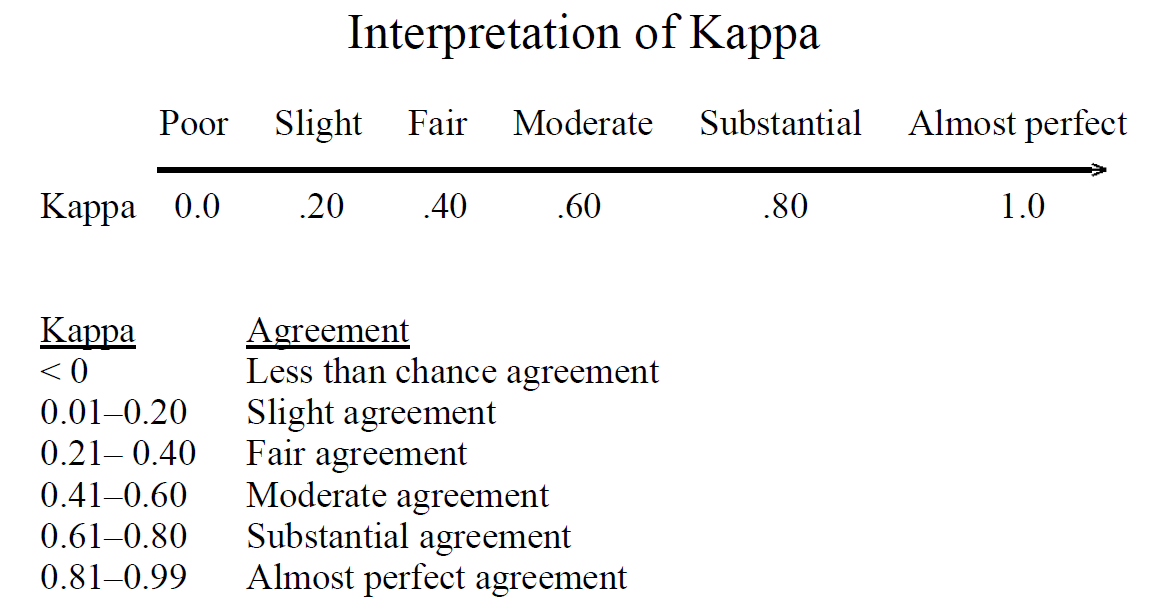

Interpretation of Kappa Values. The kappa statistic is frequently used… | by Yingting Sherry Chen | Towards Data Science

Beyond kappa: an informational index for diagnostic agreement in dichotomous and multivalue ordered-categorical ratings | SpringerLink

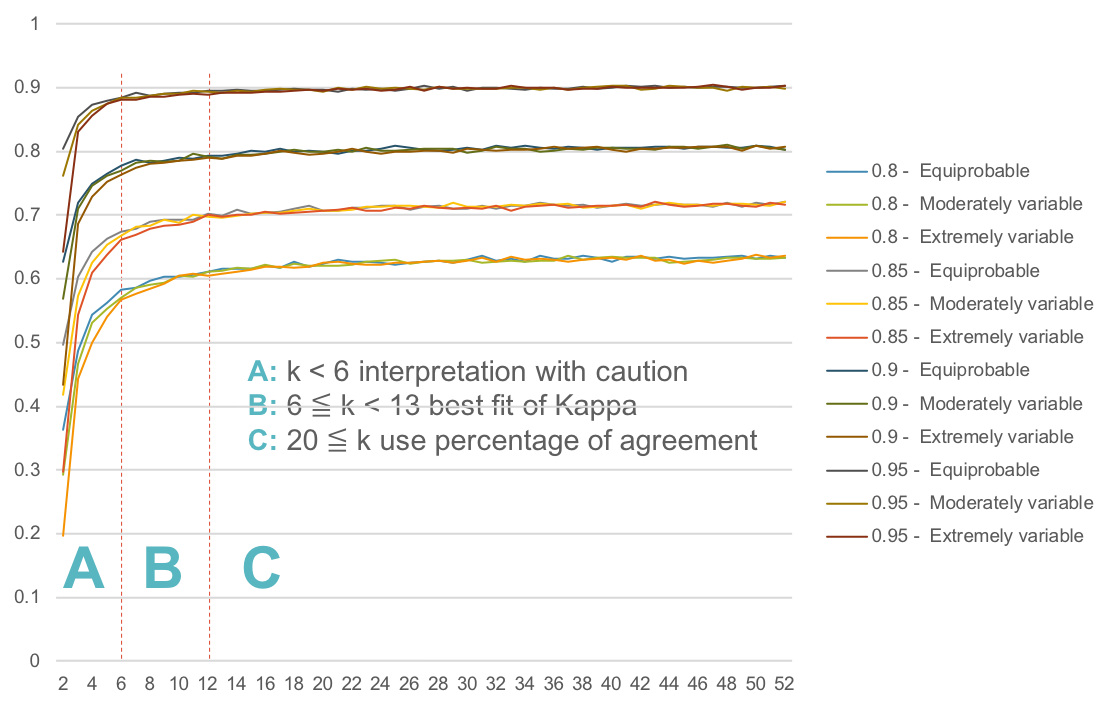

242-2009: More than Just the Kappa Coefficient: A Program to Fully Characterize Inter-Rater Reliability between Two Raters

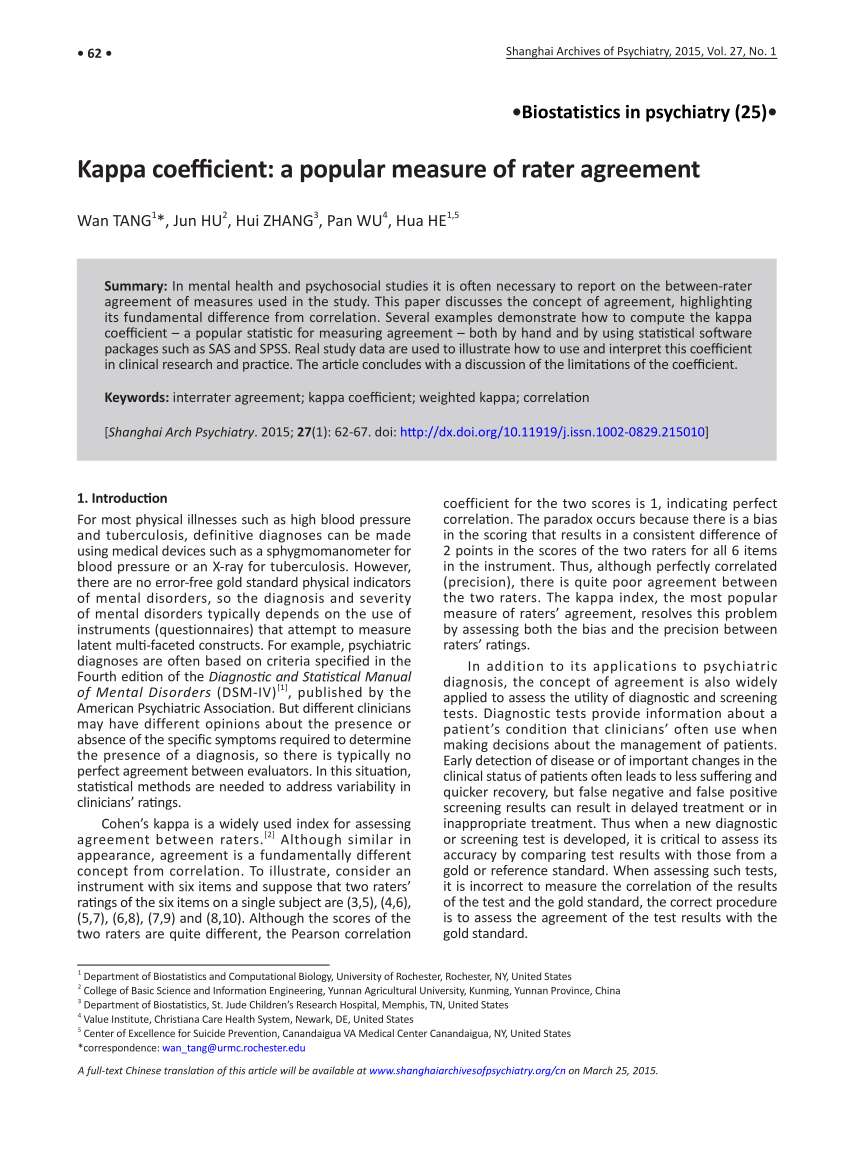

The kappa coefficient of agreement. This equation measures the fraction... | Download Scientific Diagram